The international system has never been a stranger to risk, but it is now operating without a safety net. As geopolitical competition intensifies and crisis management mechanisms deteriorate, Artificial Intelligence (AI) has slowly but surely moved from the periphery to the center of strategic decision-making. What was previously a supporting tool has become a fundamental element of how states gauge threats, communicate intent, and react to apparent danger. In the process, AI is transforming both the speed and the logic of world politics.

AI is a double-edged sword for policymakers. On the one hand, it offers better situational awareness and quicker reactions in a volatile world. On the other hand, it compresses decision-making time frames, magnifies worst-case scenarios, and turns the danger of miscalculation into a driving force. AI can help states walk a strategic tightrope that already existed, or it can raise the risk of falling.

Strategic Stability in a Period of Overlapping Crises.

The traditional view of strategic stability has rested on predictability: known competitors, known red lines, and escalation models that left room for politics. Much of this architecture is being washed away. The end of the Intermediate-Range Nuclear Forces (INF) Treaty in 2019, intensifying multipolar competition, and the erosion of arms control regimes have introduced new levels of uncertainty.

In this setting, AI systems are increasingly tasked with making sense of large volumes of real-time information drawn from satellites, cyber networks, and open-source intelligence. Although these tools can reduce informational blind spots, they also shrink the time available for reflection. Dependence on AI-based early-warning systems in particular carries a risk of automation bias, in which machine-generated assessments outweigh all other inputs in a crisis. When speed is equated with credibility, restraint can come to seem costly.

Russia, NATO, and the Arctic: Thin Ice and Narrow Margins.

Russia’s ongoing confrontation with NATO has led it to expand its use of AI-assisted surveillance, targeting, and logistics systems. NATO, for its part, has moved in the same direction, codifying its policy in the NATO Artificial Intelligence Strategy of 2021. Although these developments are aimed at enhancing preparedness, they increase the probability of reading too much into too little.

The Arctic illustrates this dilemma. As climate change opens new shipping routes and access to resources, military and dual-use activity in the region has risen. Russia’s reopening of Arctic bases and NATO’s increased reconnaissance have already heightened tension. In a region with limited communication channels, AI-based surveillance systems may interpret routine movements as deliberate maneuvers. Every small step on thin ice, literally and strategically, can have outsized effects.

NATO’s and Russia’s Militarization of the Arctic by Anna Fleck. Licensed under CC BY-ND 3.0

The Indo-Pacific: High-Technology Deterrence and the Perception Problem.

A race in AI-enabled deterrence is under way in the Indo-Pacific. China’s investment in AI-enabled maritime domain awareness, missile early-warning systems, and decision-support platforms is one example of its broader approach to military modernization. The United States and its allies are pursuing similar capabilities through efforts such as Joint All-Domain Command and Control (JADC2).

In theory, increased transparency should reduce the risk of surprise. In practice, it may also shift the baseline of what is considered normal behavior. An AI-based anomaly-detection system can push analysts toward worst-case assumptions, especially in a sensitive area like the South China Sea. RAND research has cautioned that false positives produced by AI-based surveillance can intensify tension in a crisis instead of limiting it. When everything is observed, even a routine action may look like a provocation.

The Middle East: Speed, Automation, and Escalation Thresholds.

AI is also being integrated into intelligence fusion, missile defense, and cyber activities in the Middle East. Israel is reported to have used AI-assisted targeting and analysis systems in its operations in Gaza, notably in 2021. Iran, in turn, has increased its reliance on drones and cyber instruments, as seen in the 2019 attack on Saudi Arabia’s Abqaiq oil facility.

These developments reveal a central tension. AI may strengthen deterrence by denying adversaries the advantage of surprise, but it also lowers the threshold for action. Responses that unfold at machine speed leave political leaders to pick up the pieces afterwards. This is a constant destabilizer in a region where escalation can spread among several actors in a matter of seconds.

South Asia: Automation within a Weak Deterrence Space.

South Asia remains one of the most unstable deterrence environments in the world. Partly in response to recurring crises, India and Pakistan are incorporating AI into surveillance, border monitoring, and early-warning systems. The 2019 Pulwama attack and the airstrikes that followed showed how quickly uncertainty can drive escalation.

Analysts warn that AI systems trained on incomplete or biased data can misread routine military operations as acts of aggression. In such an environment, automation is more likely to fan the flames than to act as a stabilizing factor.

The Accumulation of Risk and Peripheral Arenas.

AI-driven competition also plays out in arenas that might be considered peripheral. In Venezuela, digital monitoring and information operations have shaped both domestic government operations and foreign manipulation.

Such actions may not make headlines, but they are part of a larger pattern of small, AI-powered moves that can produce strategic outcomes. In a closely interconnected system, even a minor action can ripple outward.

Artificial Intelligence Governance: Human in the Loop.

The essence of the challenge AI presents is governance, not technological determinism. Over-dependence on opaque systems invites a hair-trigger form of deterrence in which speed replaces judgment. Emphasizing accountability and contextual decision-making, policy research increasingly favors human-in-the-loop or human-on-the-loop models.

These principles can be translated into practice. Options include confidence-building measures aimed at AI transparency, crisis communication channels adapted to automated processes, and shared standards for the use of AI in early-warning and command-and-control frameworks. The ongoing discussions in the UN Group of Governmental Experts on lethal autonomous weapons systems can serve as a starting point.

Slowing the Clock without Stopping It.

There is no denying that AI has accelerated the pace of deterrence and escalation in global politics. In an already overstretched world where crises overlap, this speed threatens to destabilize the strategic environment. Yet AI is not destiny. If it is incorporated deliberately, transparently, and under sustained human supervision, it can strengthen restraint rather than diminish it.

The challenge is to resist the temptation to see AI as a mere race. Strategy in the era of AI will be less about who moves fastest and more about the ability to adapt, sustain dialogue, and keep human judgment in the cockpit.

If you want to submit your articles and/or research papers, please visit the Submissions page.

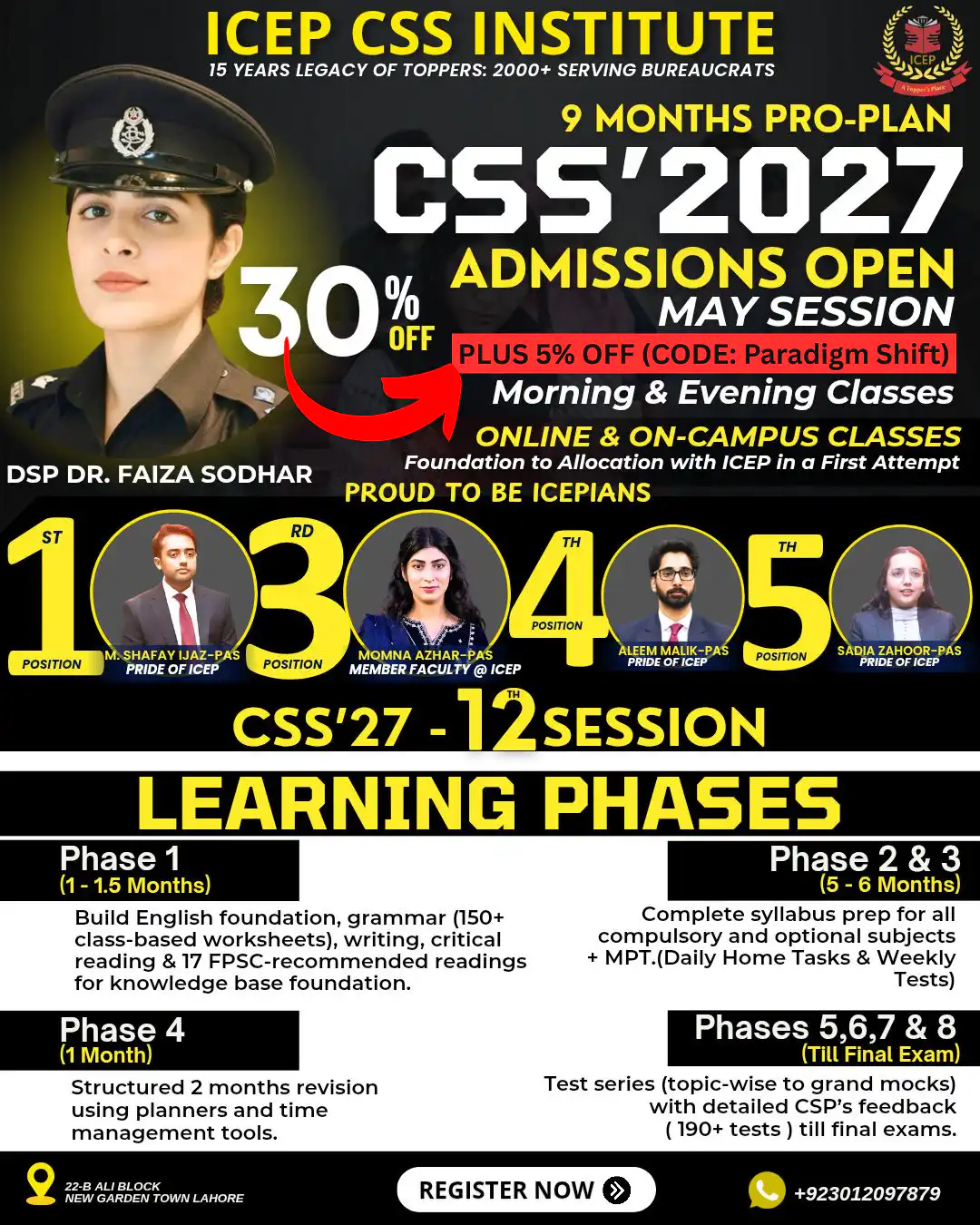

To stay updated with the latest jobs, CSS news, internships, scholarships, and current affairs articles, join our Community Forum!

The views and opinions expressed in this article/paper are the author’s own and do not necessarily reflect the editorial position of Paradigm Shift.

Pakizah Parveen holds an MPhil in International Relations, with a strong academic focus on global politics, security studies, and contemporary international affairs. She is currently serving as a Visiting Lecturer at Bahria University and FAST (National University of Computer and Emerging Sciences).